Your traffic dropped after Google's March 2026 Core Update — here's the one fix that actually works

Google's March 2026 Core Update knocked 24% of top-10 pages out of the top 100. Here's the two-step fix practitioners are reporting results from: prune your weakest pages, then add information gain to your surviving pillar pages.

Google's March 2026 Core Update finished rolling out on April 8 — and if your site hasn't recovered in the six weeks since, there's a specific reason. This week's tip covers the one structural fix that practitioners are actually reporting results from, and how to tell if it's working.

What Google changed — and who got hit

The March 2026 Core Update ran from March 27 to April 8, 2026 (12 days) [1] and landed harder than any update in recent memory: SEMrush's Sensor peaked at 9.5 out of 10, and 24% of pages that were in the top 10 before rollout fell completely out of the top 100 by the time it finished. [2]

The forum consensus across WebmasterWorld, Reddit's r/SEO and r/bigseo, and SERoundtable points to a single root cause: Google significantly raised its content quality threshold. [3] Three content patterns took the hardest hits:

- AI-generated content at scale: pages produced by automated pipelines with no human editorial layer

- Self-promotional listicles: pages where the publisher's own product is consistently ranked #1 across 200+ "best of" articles [4]

- Broad thin pages: content covering a wide topic area without demonstrating genuine depth in any of it

The sites that gained or held steady had one thing in common: they published something no other top-10 result had, whether original data, a first-hand case study, or a distinct analytical angle. [5] Pages from sites with clear author credentials also held rankings better: 73% of YMYL (health, finance, legal) top-ranking pages now show explicit author qualifications. [6]

Why the obvious fixes fail

Before getting to what works, it's worth naming the three responses that consistently don't.

Updating publish dates does nothing. Google detects when content was actually modified, not when the date field was changed. [2] Digital Applied reviewed dozens of recovery cases and found that "sites that make shallow cosmetic improvements (updating publish dates, adding a few sentences to existing pages, or reordering sections) consistently fail to recover." [5]

Rewriting content with AI is worse. This update specifically targets AI behavioral patterns: covering every angle without taking a position, listing information without synthesizing it, never making a concrete recommendation. Running your hurt pages through an AI rewriter reproduces those exact patterns. [5]

Building backlinks targets the wrong signal. Google's John Mueller has said that core updates are "more related to trying to figure out what the relevance of a site is overall" rather than link profiles. [7]

The fix: consolidate pages, then add information gain

This is a two-step process. Most practitioners are reporting results from doing both steps in sequence.

Step 1: Remove or merge your weakest pages

Pull up Google Search Console and filter to the Pages view. Sort by absolute click loss over the past 60 days: raw clicks lost, not percentage drop. Work through your bottom 20–30% of pages and make one of four decisions for each [8]:

| Score (5–25) | Action |
|---|---|
| 5–10 (thin, no recovery path) | Remove: return a 410 or 301-redirect to a stronger page |
| 11–15 (overlaps with another page) | Consolidate: merge into your canonical page on this topic |
| 16–20 (has substance but needs work) | Rewrite: substantial overhaul, not cosmetic edits |
| 21–25 (already solid) | Enhance: add one new original data point |

The scoring itself: assign 1–5 points on each of five dimensions (topical depth, information gain relative to top-10 competitors, E-E-A-T signals, user experience, and technical health) and add them up, for a total between 5 and 25. A page scoring 5–10 overall has no realistic path to competing.
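If you keep the audit in a script or spreadsheet export, the rubric can be sketched in a few lines of Python. This is a minimal illustration, not a tool from any of the cited sources: the dimension keys and the function names `total_score` and `action_for` are made up for the example, and the 1–5 ratings themselves still come from your own judgment.

```python
# The five dimensions from the rubric. The key names are illustrative only.
DIMENSIONS = (
    "topical_depth",
    "information_gain",
    "eeat",
    "user_experience",
    "technical_health",
)


def total_score(ratings: dict[str, int]) -> int:
    """Sum the five 1-5 ratings into a 5-25 total, validating the input."""
    missing = [d for d in DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for name in DIMENSIONS:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return sum(ratings[name] for name in DIMENSIONS)


def action_for(total: int) -> str:
    """Map a 5-25 total to the action bands used in the table."""
    if not 5 <= total <= 25:
        raise ValueError("total must be between 5 and 25")
    if total <= 10:
        return "remove"
    if total <= 15:
        return "consolidate"
    if total <= 20:
        return "rewrite"
    return "enhance"


if __name__ == "__main__":
    page = {
        "topical_depth": 3,
        "information_gain": 2,
        "eeat": 3,
        "user_experience": 4,
        "technical_health": 4,
    }
    print(action_for(total_score(page)))
```

Treat the band as a starting point for the per-page decision, not a replacement for it.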

Deleting pages to improve rankings sounds counterintuitive until you consider how Google evaluates sites: quality signals are assessed at the domain level. A site with 80 strong pages and 20 thin pages will outperform the same 80 strong pages dragging 200 thin ones. [8] Marie Haynes observed this directly: a city guide site that noindexed its weakest YMYL-adjacent pages recovered rankings on its core pages within the following core update cycle. [9]

One caution: don't bulk-delete everything that dropped. Lucid Media's Jason Poonia recommends auditing each page individually before removing it, because deleting pages that have genuine depth but were caught in collateral volatility can hurt your topical authority signals. [10]
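Once the per-page decisions are made, the Remove and Consolidate outcomes reduce to a small lookup: each pruned URL either returns a 410 or 301-redirects to a stronger page. The sketch below is framework-agnostic and purely illustrative. The paths in `PRUNE_PLAN` are invented, and `resolve` and `find_chains` are hypothetical helper names; wire the same logic into whatever server or CMS you actually use.

```python
from typing import Optional

# Hypothetical pruning plan: pruned path -> redirect target, or None for a 410.
PRUNE_PLAN: dict[str, Optional[str]] = {
    "/blog/old-thin-post": None,
    "/blog/tool-roundup-2023": "/guides/tool-roundup",
}


def resolve(
    path: str, plan: dict[str, Optional[str]] = PRUNE_PLAN
) -> tuple[int, Optional[str]]:
    """Return (status code, redirect target) for a requested path."""
    if path not in plan:
        return 200, None  # not pruned: serve the page as usual
    target = plan[path]
    if target is None:
        return 410, None  # removed for good, with no replacement
    return 301, target  # merged into a stronger page


def find_chains(plan: dict[str, Optional[str]]) -> list[str]:
    """List pruned paths whose redirect target is itself pruned."""
    return sorted(
        source
        for source, target in plan.items()
        if target is not None and target in plan
    )
```

Running `find_chains` before deploying is a cheap way to catch a redirect that points at a page you have also scheduled for removal.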

Step 2: Add information gain to your remaining pillar pages

"Information gain" means your page contains at least one fact, data point, or insight that no other top-5 result for the same query has. After trimming, pick your 5–10 most important pages and ask that question about each one.

Google's VP of Search, Liz Reid, described exactly what's being rewarded now: "What people click on in AI Overviews is content that is richer and deeper. That surface-level AI-generated content, people don't want that, because if they click on that they don't actually learn that much more than they previously got." [9]

For an indie developer, "information gain" doesn't require a research team. Practical options:

- Run a small test and publish the numbers — even a sample of 10 or 20 is something no content farm can reproduce

- Write from what you personally built or broke — your production incident, your failed experiment, your actual usage data

- Take a position — if every top result for a query hedges both sides, write the version that argues one side with reasons

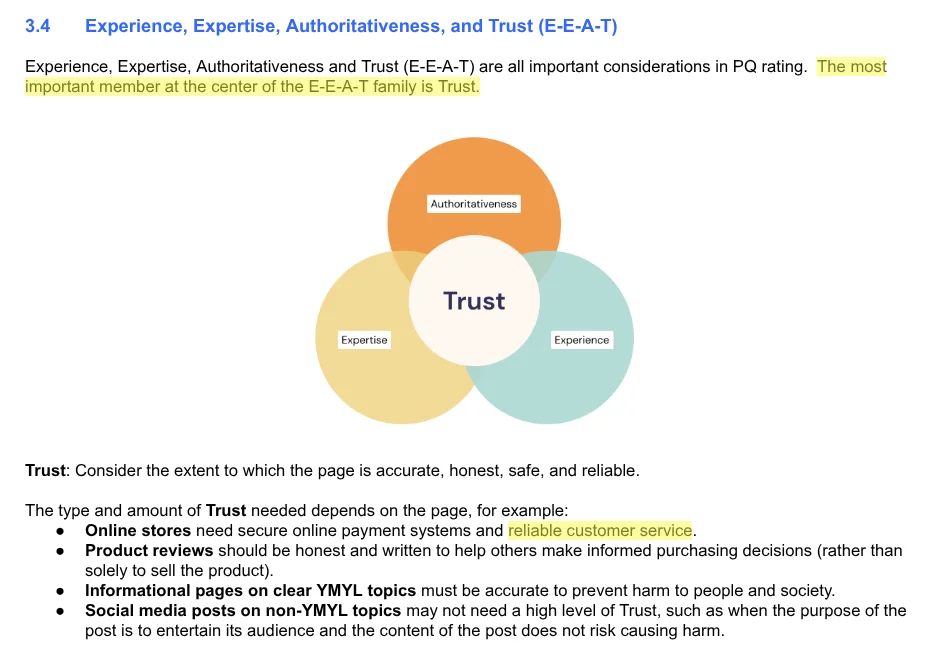

Alongside the content changes, add three E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals to each revised page: a named author with a one-paragraph bio linking to verifiable credentials (GitHub profile, LinkedIn, personal site), at least one original screenshot or photo proving first-hand experience, and one external citation to an authoritative source for any statistical claim. [5] [10]

How to tell if it's working

The signal to watch is weekly impressions in Search Console, not daily ranking positions. Impressions recover before clicks do: Google starts showing your improved page in results before users click at the previous rate. A sustained upward trend in impressions over 4–6 weeks is the earliest reliable indicator that the fix is being noticed. [8]
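If you export daily impressions from Search Console, the weekly check can be automated. The sketch below is one possible reading of "sustained upward trend", not a definition from the cited sources: it assumes the trend means every one of the last few week-over-week changes is an increase. The function names and the `(date, impressions)` row shape are illustrative.

```python
from collections import defaultdict
from datetime import date
from typing import Iterable


def weekly_impressions(daily_rows: Iterable[tuple[date, int]]) -> list[int]:
    """Sum daily impressions into ISO weeks, in chronological order.

    Pass complete weeks only: a partial first or last week will look
    like a drop and distort the trend.
    """
    totals: dict[tuple[int, int], int] = defaultdict(int)
    for day, impressions in daily_rows:
        iso = day.isocalendar()
        totals[(iso[0], iso[1])] += impressions  # key: (ISO year, ISO week)
    return [totals[key] for key in sorted(totals)]


def sustained_uptrend(weekly: list[int], min_weeks: int = 4) -> bool:
    """True if each of the last `min_weeks` week-over-week changes is a rise."""
    if len(weekly) < min_weeks + 1:
        return False  # not enough history to judge yet
    recent = weekly[-(min_weeks + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))
```

The strict "every week rises" rule is deliberately conservative. If your traffic is seasonal or noisy, comparing a rolling average may be a better fit.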

Use this comparison window: filter GSC Performance to March 15–26 (pre-update baseline) versus April 1–12 (an equal-length window covering the end of the rollout and the first days after it). That pinpoints the pages whose drops are directly attributable to this update, not earlier drift. [11]
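With per-page click totals exported for both windows, ranking pages by raw clicks lost takes only a few lines. This is a minimal sketch: it assumes you have already loaded each export into a dict of page path to clicks, and the `click_loss` name and the sample paths are hypothetical. Because the two windows are the same length (12 days each), raw click totals can be compared directly.

```python
def click_loss(before: dict[str, int], after: dict[str, int]) -> list[tuple[str, int]]:
    """Rank pages by absolute clicks lost between two equal-length windows.

    Pages missing from one export count as zero clicks in that window.
    Only pages that lost clicks are returned, biggest loss first.
    """
    pages = before.keys() | after.keys()
    losses = [(page, before.get(page, 0) - after.get(page, 0)) for page in pages]
    losing = [(page, lost) for page, lost in losses if lost > 0]
    # Sort by loss descending, then by path so ties come out in a stable order.
    return sorted(losing, key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    baseline = {"/guides/a": 100, "/guides/b": 50, "/guides/c": 10}
    post_update = {"/guides/a": 40, "/guides/b": 45, "/guides/d": 5}
    for page, lost in click_loss(baseline, post_update):
        print(page, lost)
```

Sorting by absolute loss rather than percentage keeps a page that fell from 10 clicks to 0 from outranking one that fell from 100 to 40.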

The realistic timeline, per Lucid Media: "Recovery takes three to six months, not three to six weeks. Anyone telling you otherwise is selling you something." [10] The next core update, expected in June or July 2026, is typically when Google re-evaluates sites that made substantive changes. Pages you improve now need time to be re-crawled and re-assessed before that window. Start this week, not next month.

References

- [1] Google Search Status Dashboard, "March 2026 core update"

- [2] dataslayer, "Google Core Updates 2026: Timeline, Changes and Recovery Playbook"

- [3] WebmasterWorld, "May 2026 Google Search Observations"

- [4] SERoundtable, "Is Google Hitting Self-Promotional Listicles Hard Again?"

- [5] Digital Applied, "Surviving the March 2026 Core Update: Recovery Guide"

- [6] Ollie Limpkin, "The Google March 2026 core update, what happened and what it means"

- [7] Style Factory Productions, "How to Recover from a Google Core Update (2026)"

- [8] Digital Applied, "March 2026 Core Update: Ranking Drop Recovery Plan"

- [9] Marie Haynes, "The December 2025 core update: Observations on 4 sites that did well"

- [10] Lucid Media, "Google March and April 2026 Core Updates: Why Your Traffic Dropped"

- [11] Fiza Chowdhury (LinkedIn), "B2B Site Recovery Playbook for Google's March 2026 Core Update"
